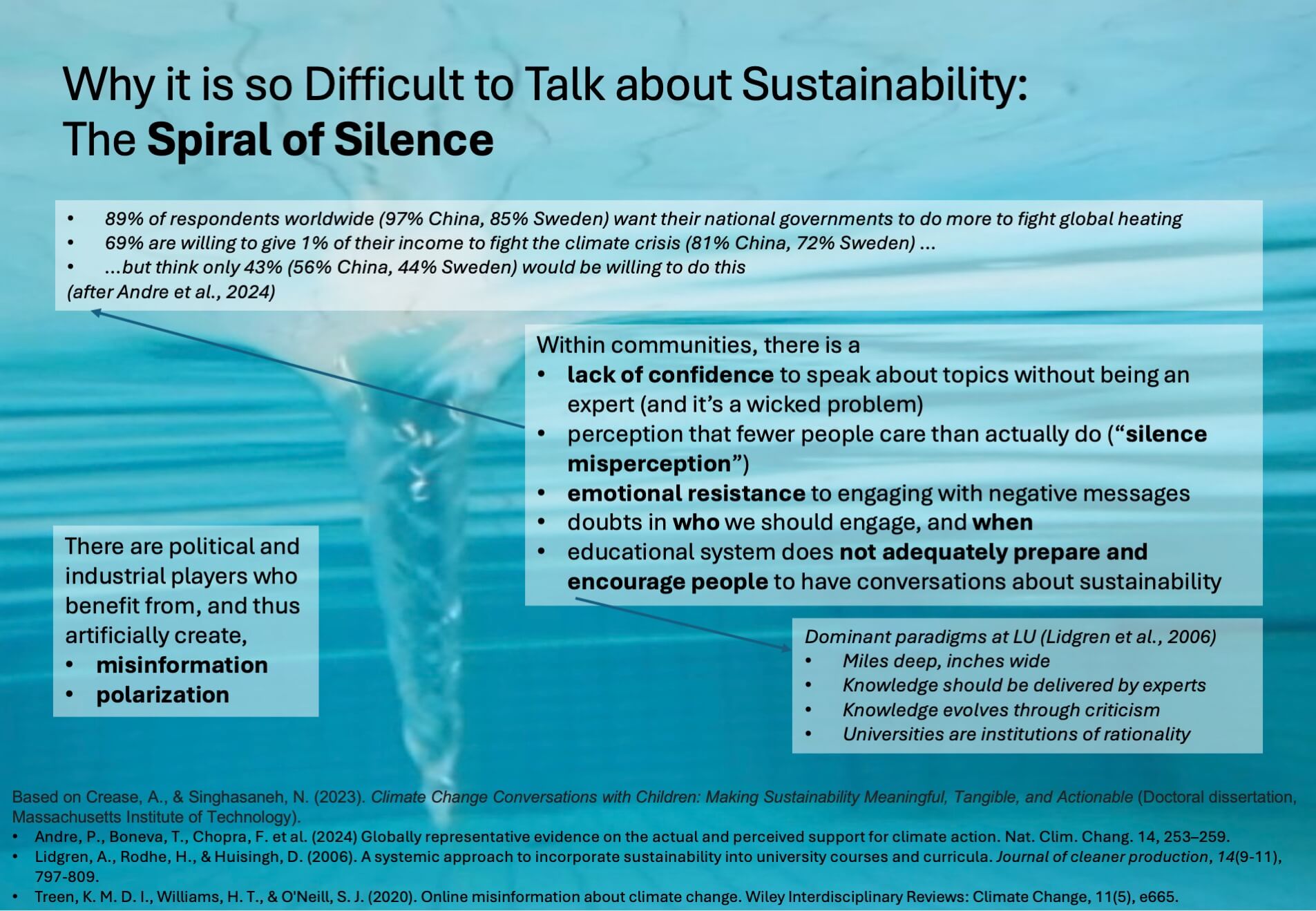

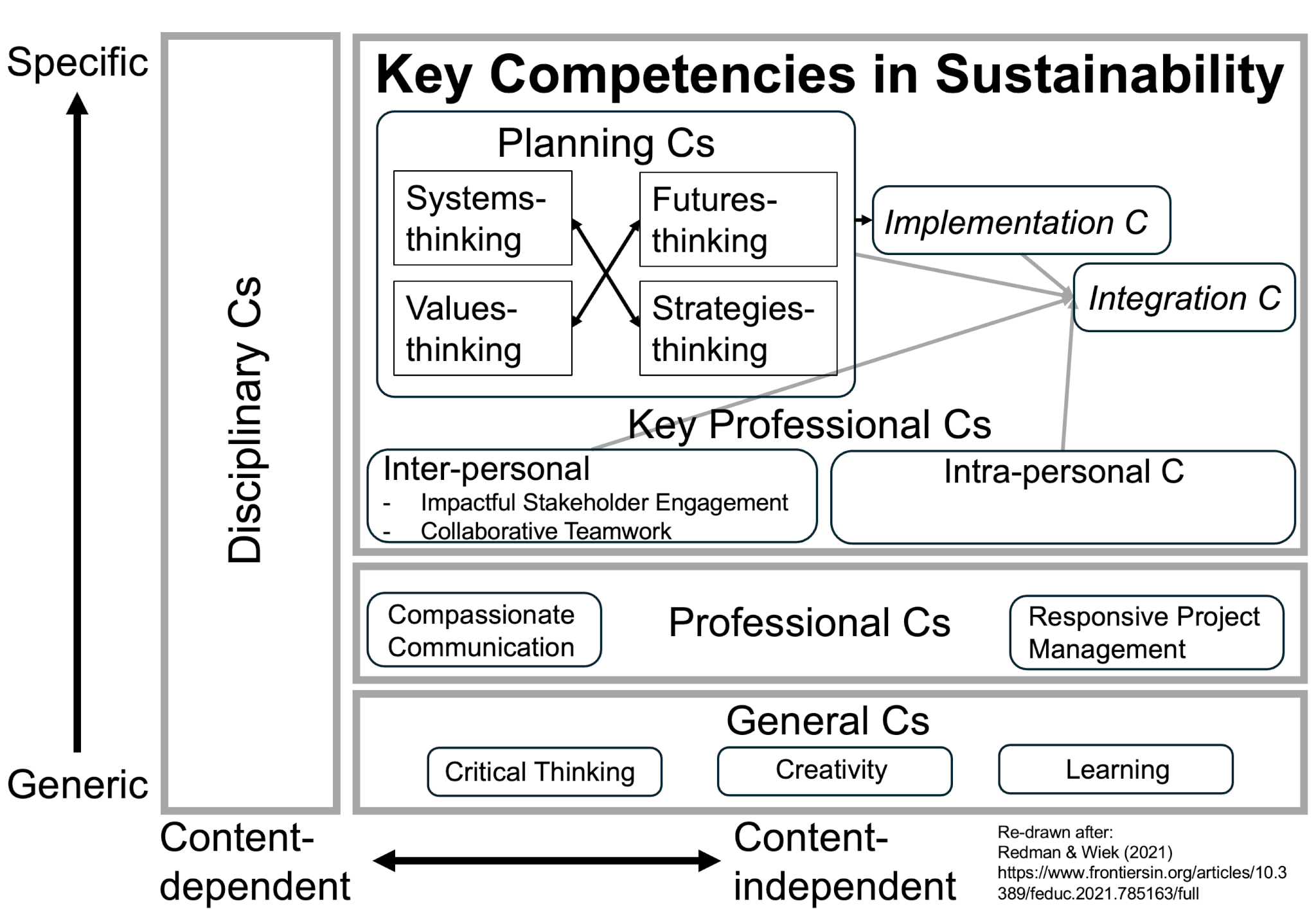

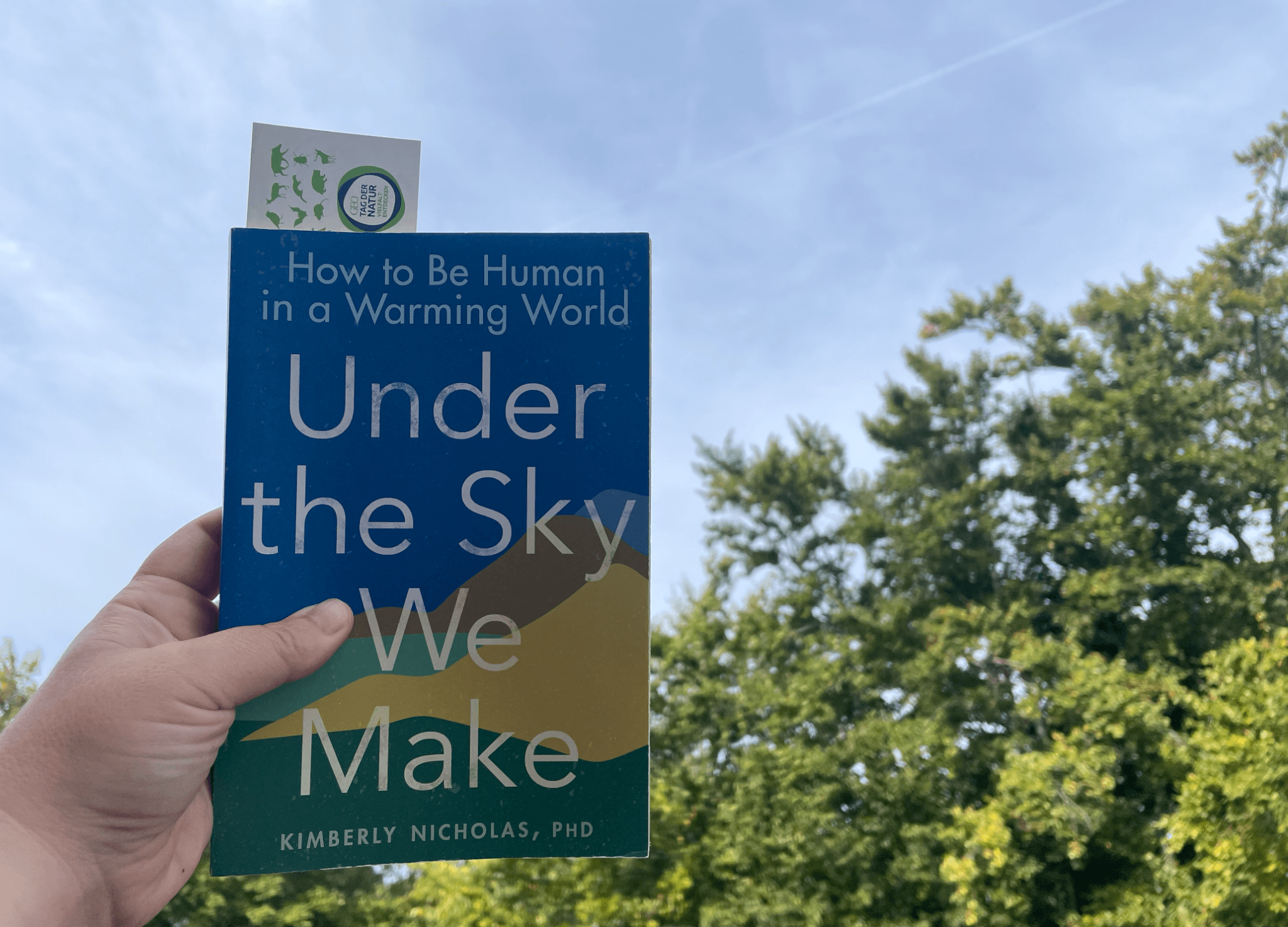

Currently reading Kukowski et al. (2026) on “Leveraging Agency for Climate Change Mitigation”

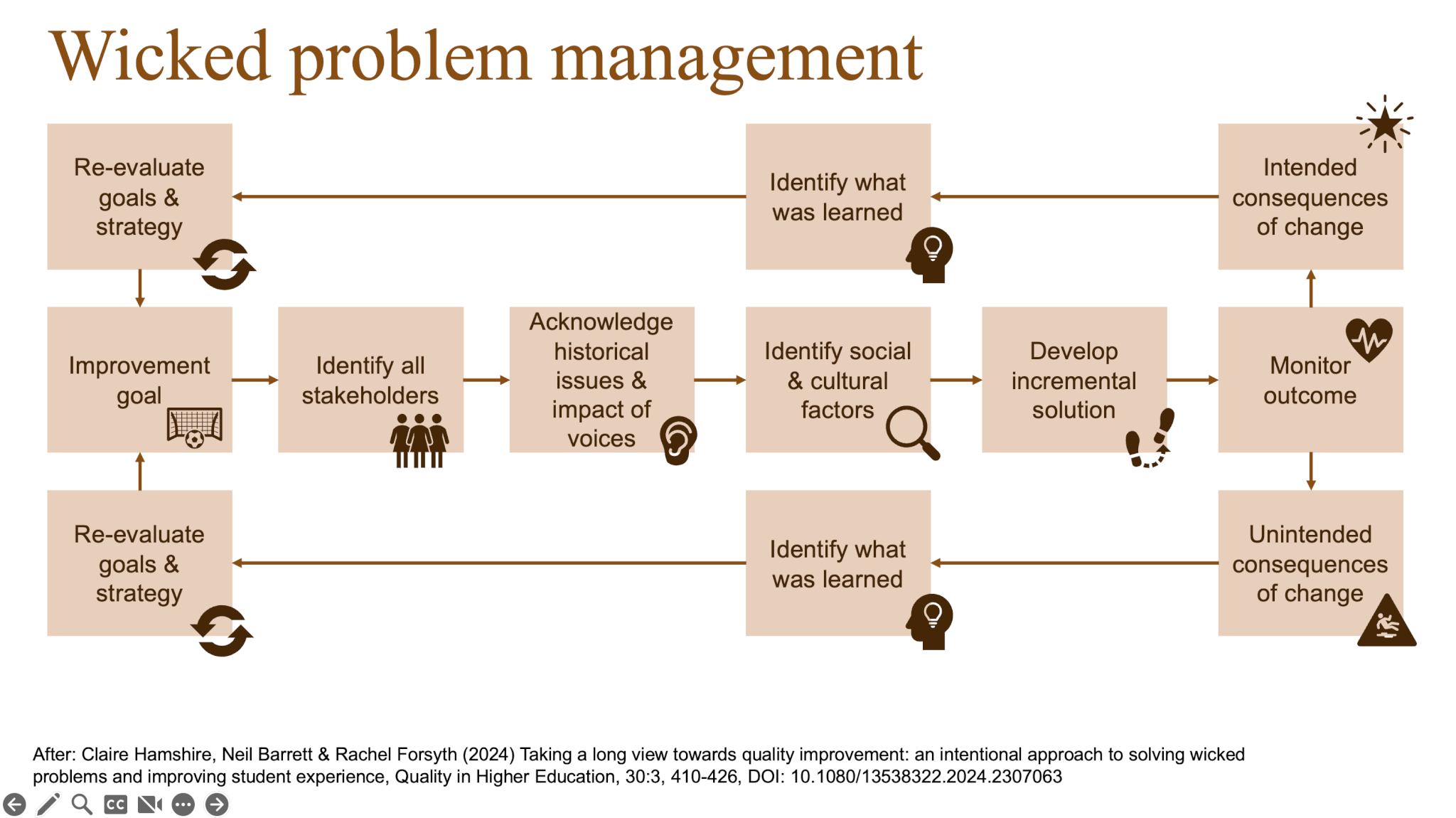

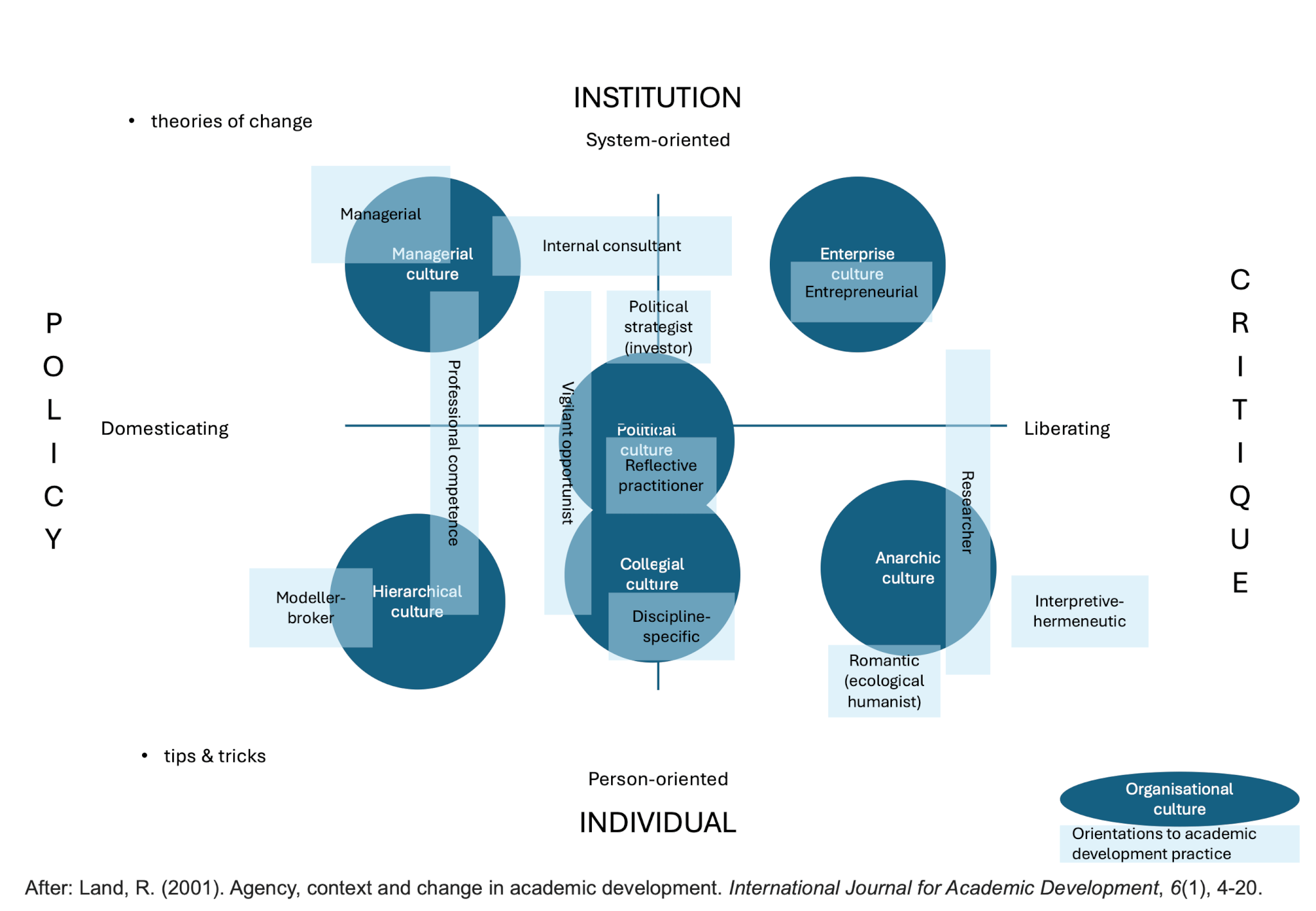

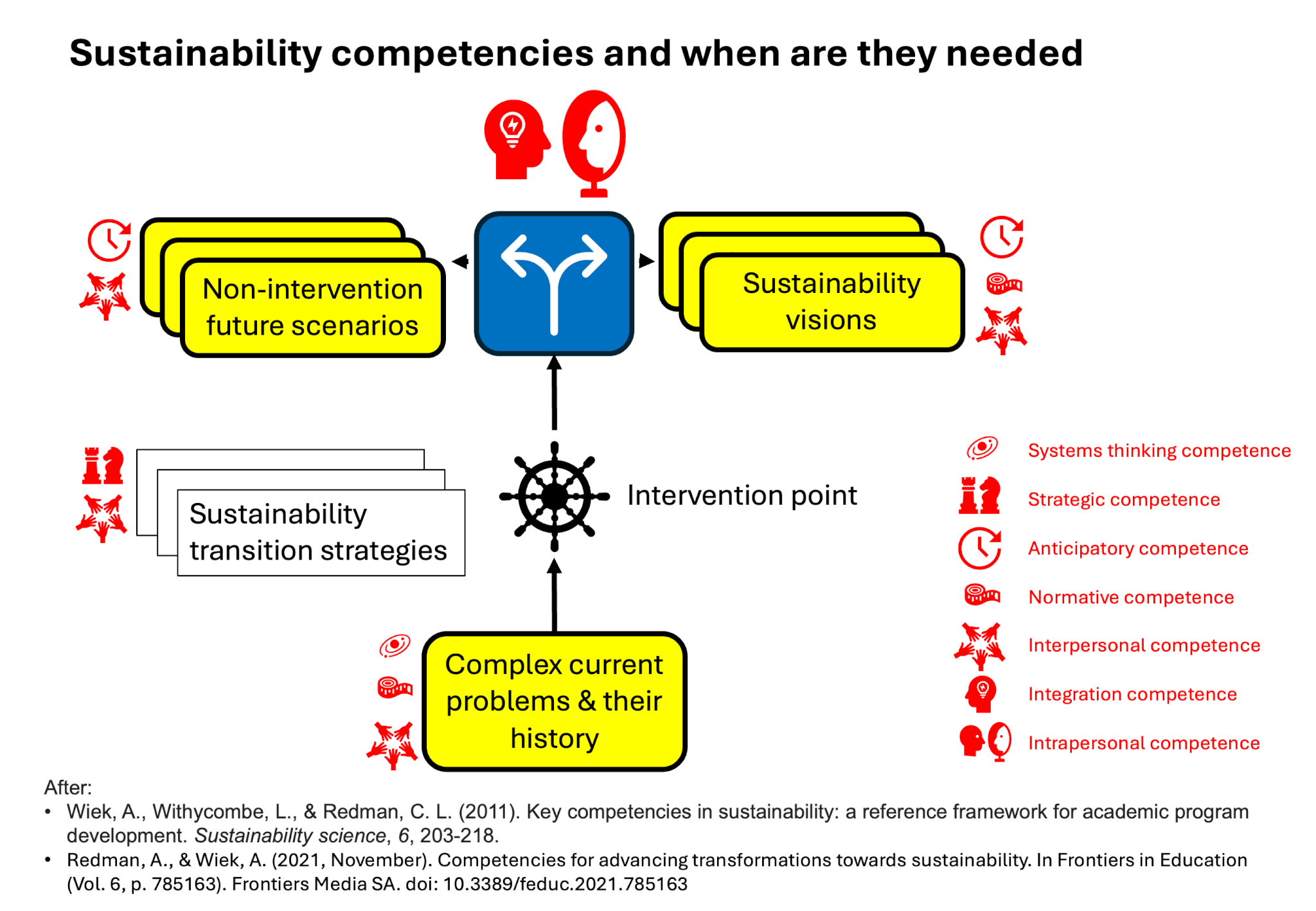

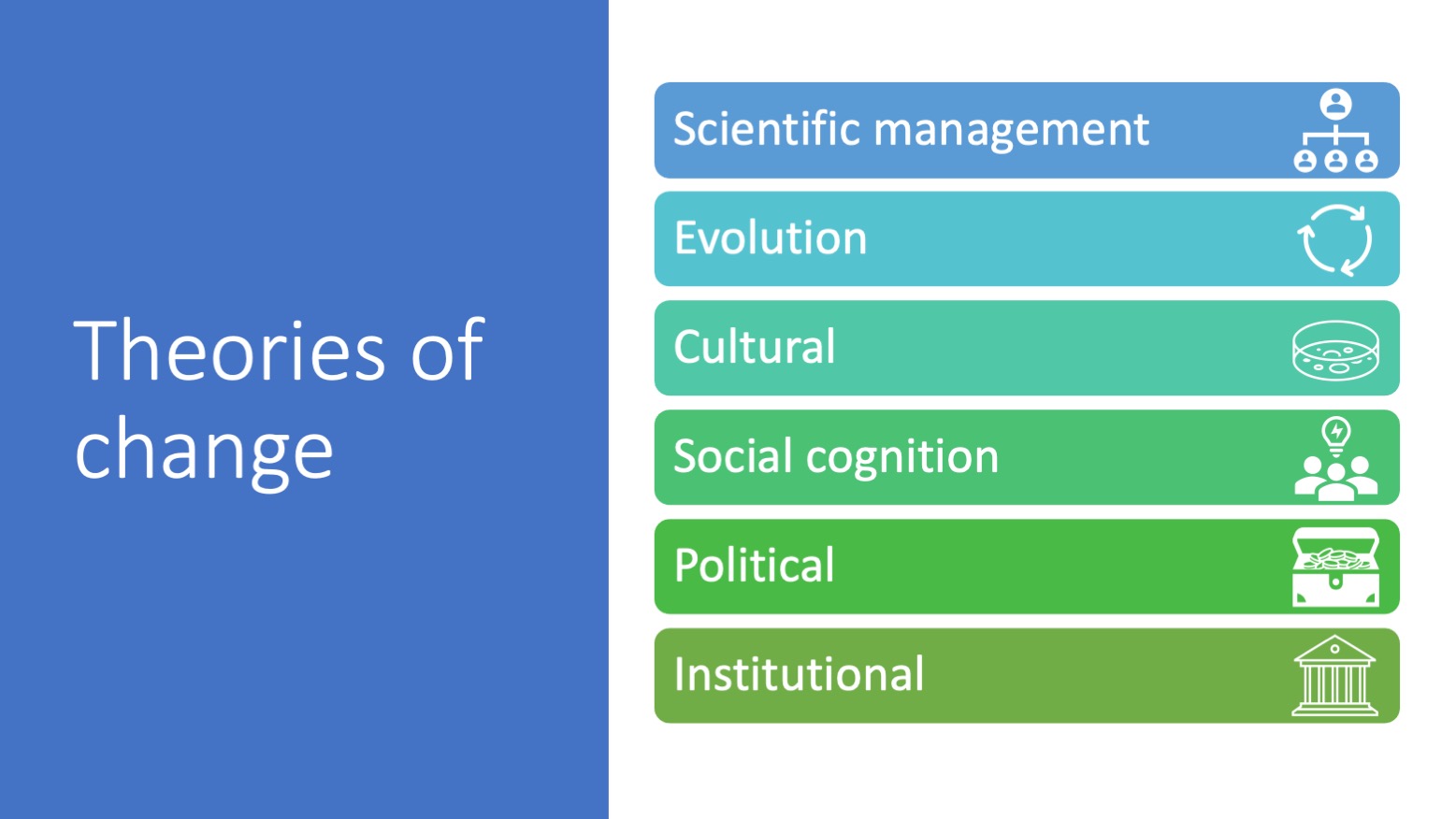

Just read this article (only 3 pages, so check it out yourself!) and think it is super helpful! While we often talk about climate action as either an individual problem OR a structural problem, as behavior change OR technological solutions, this paper makes clear that we need AND CAN DO all of the […]