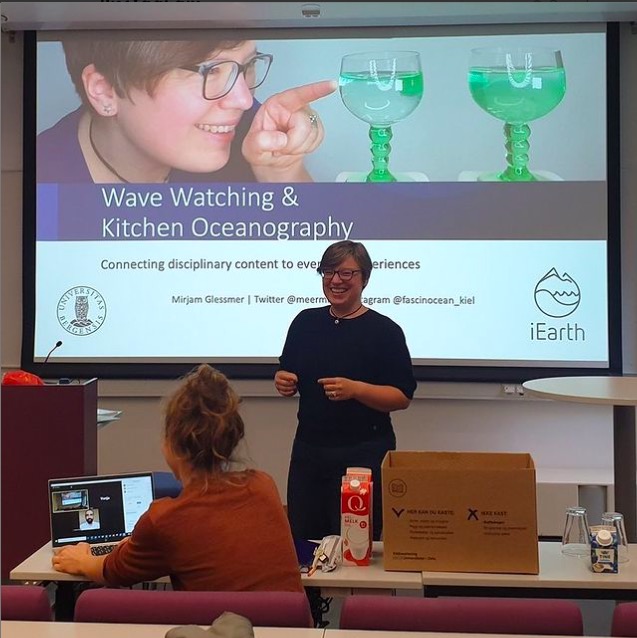

Recently published: “Adapting a Teaching Method to Fit Purpose and Context” (Glessmer, Bovill & Daae; 2024)

New article published! “Adapting a Teaching Method to Fit Purpose and Context” (Glessmer, Bovill & Daae; 2024) is based on this blog post, but a little more thought through, and polished together with Cathy and Kjersti in beautiful Voss! Check it out here, and enjoy!