A collection of methods by Wandercoaching for facilitating the coaching of sustainability initiatives

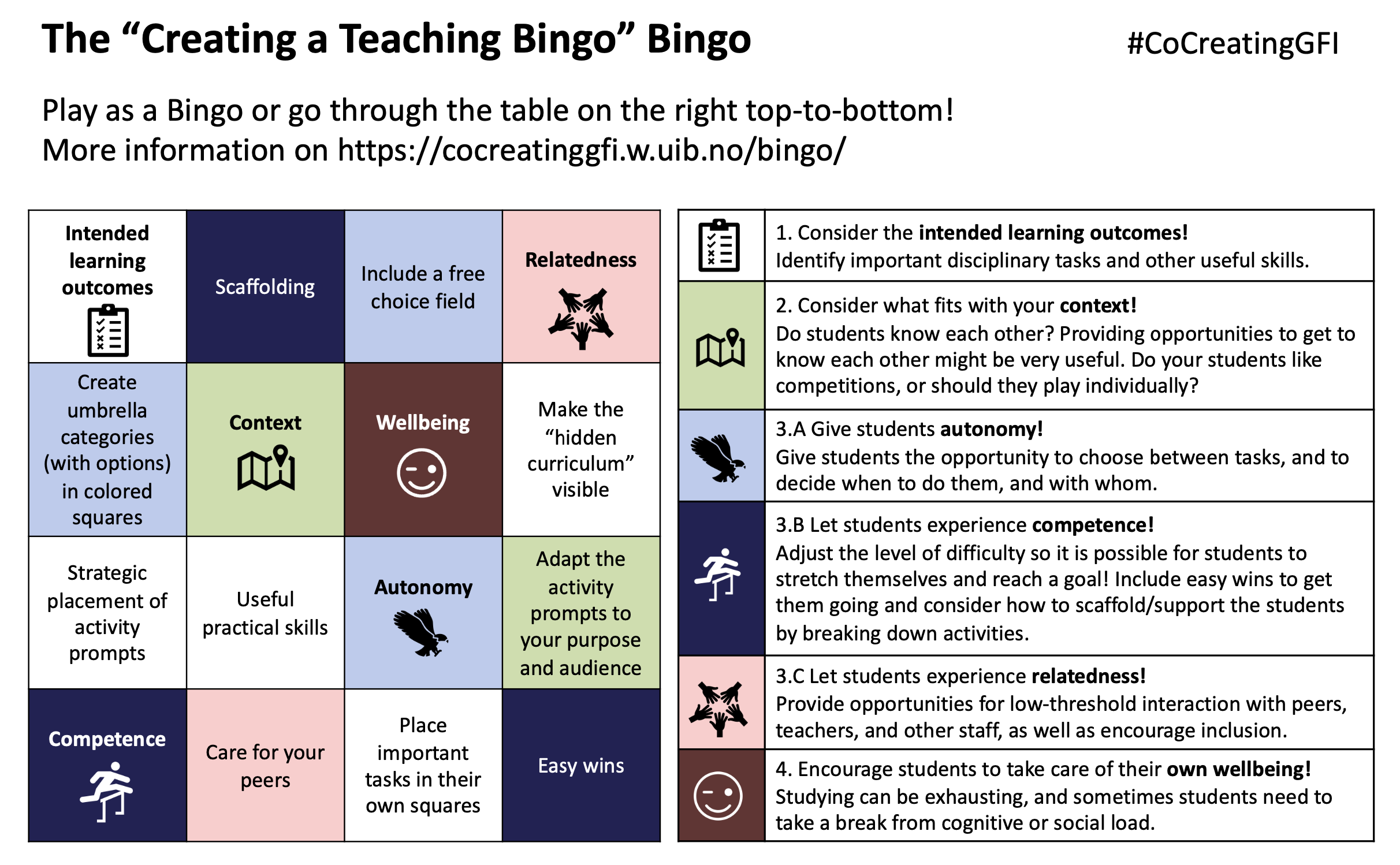

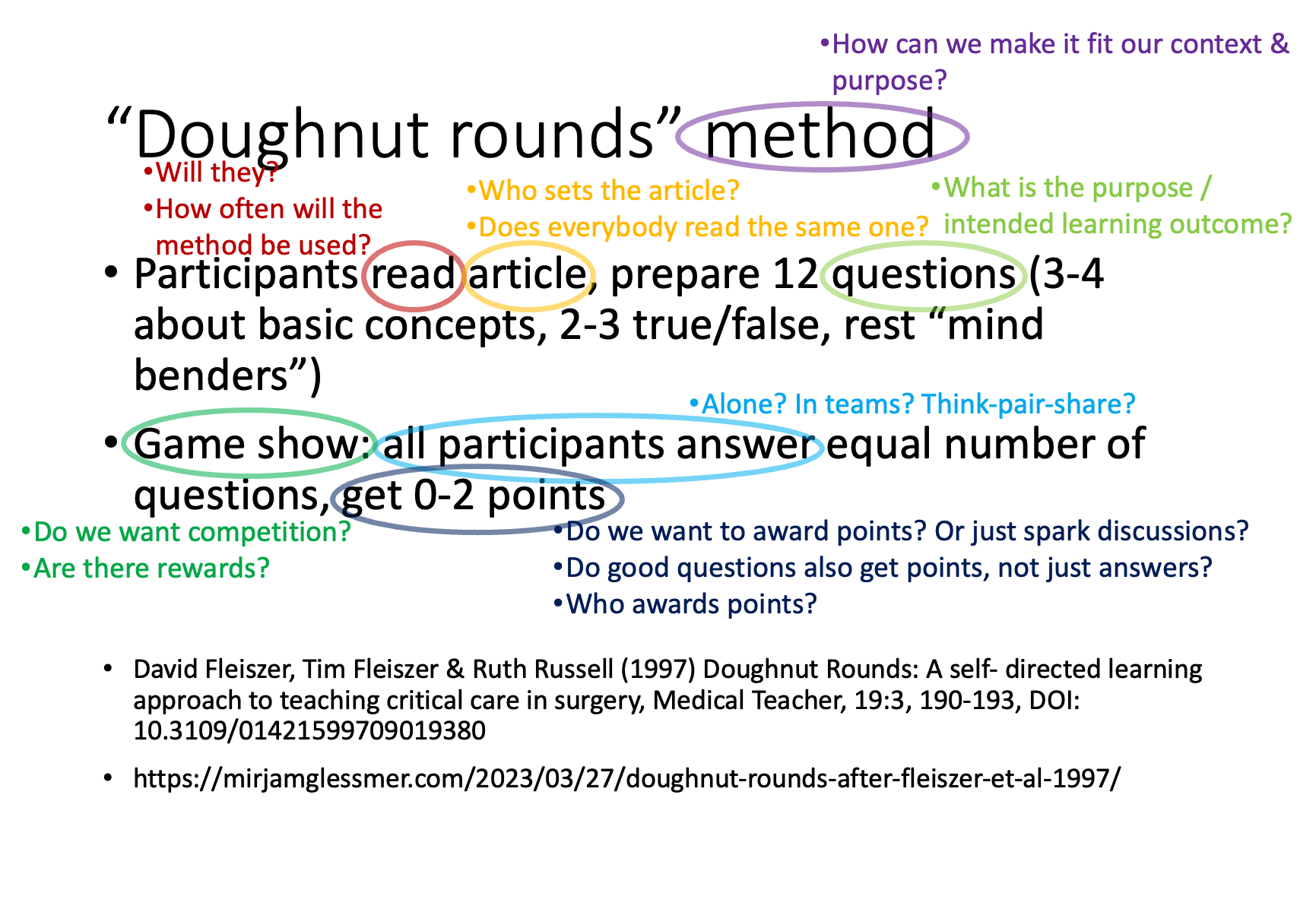

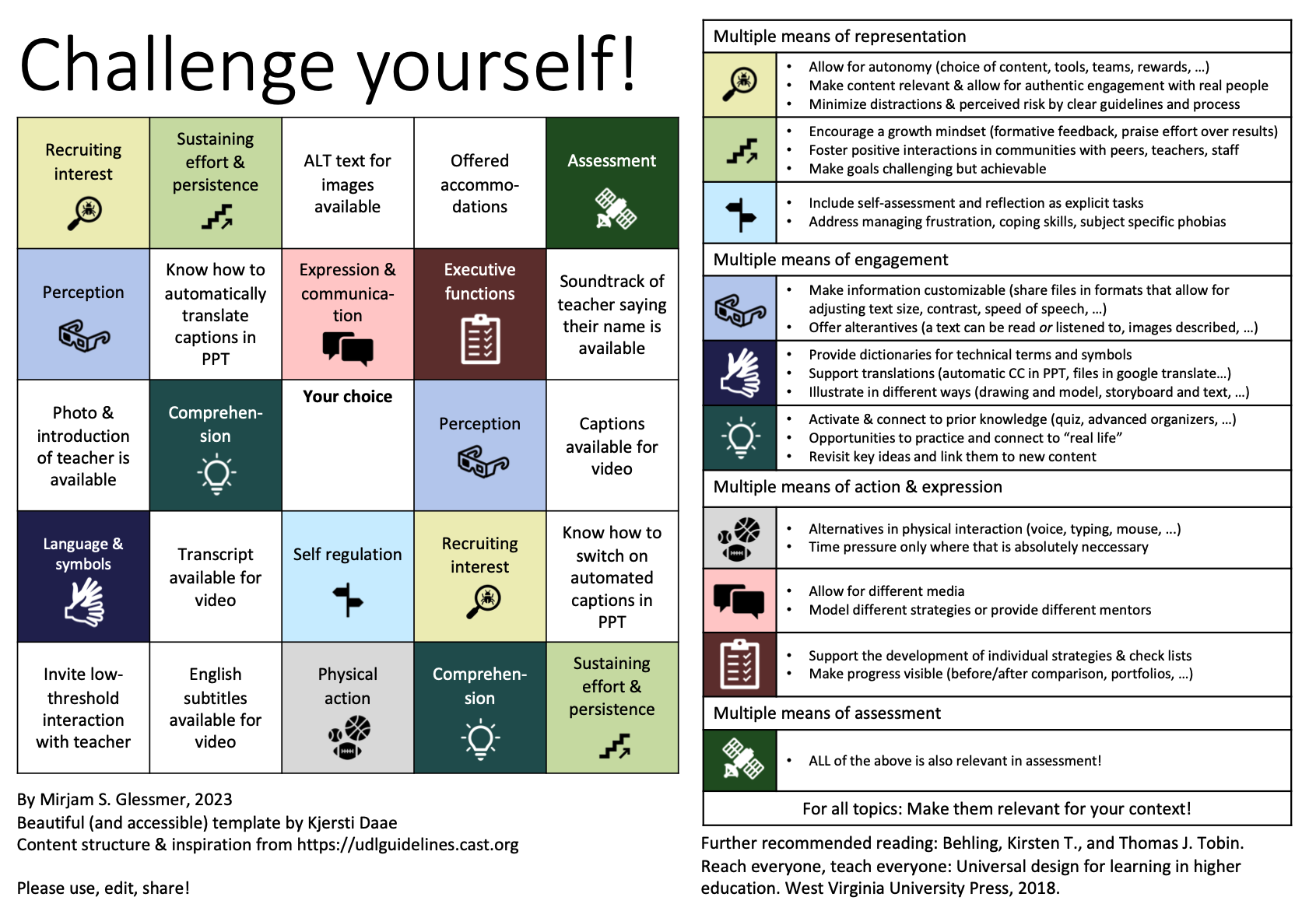

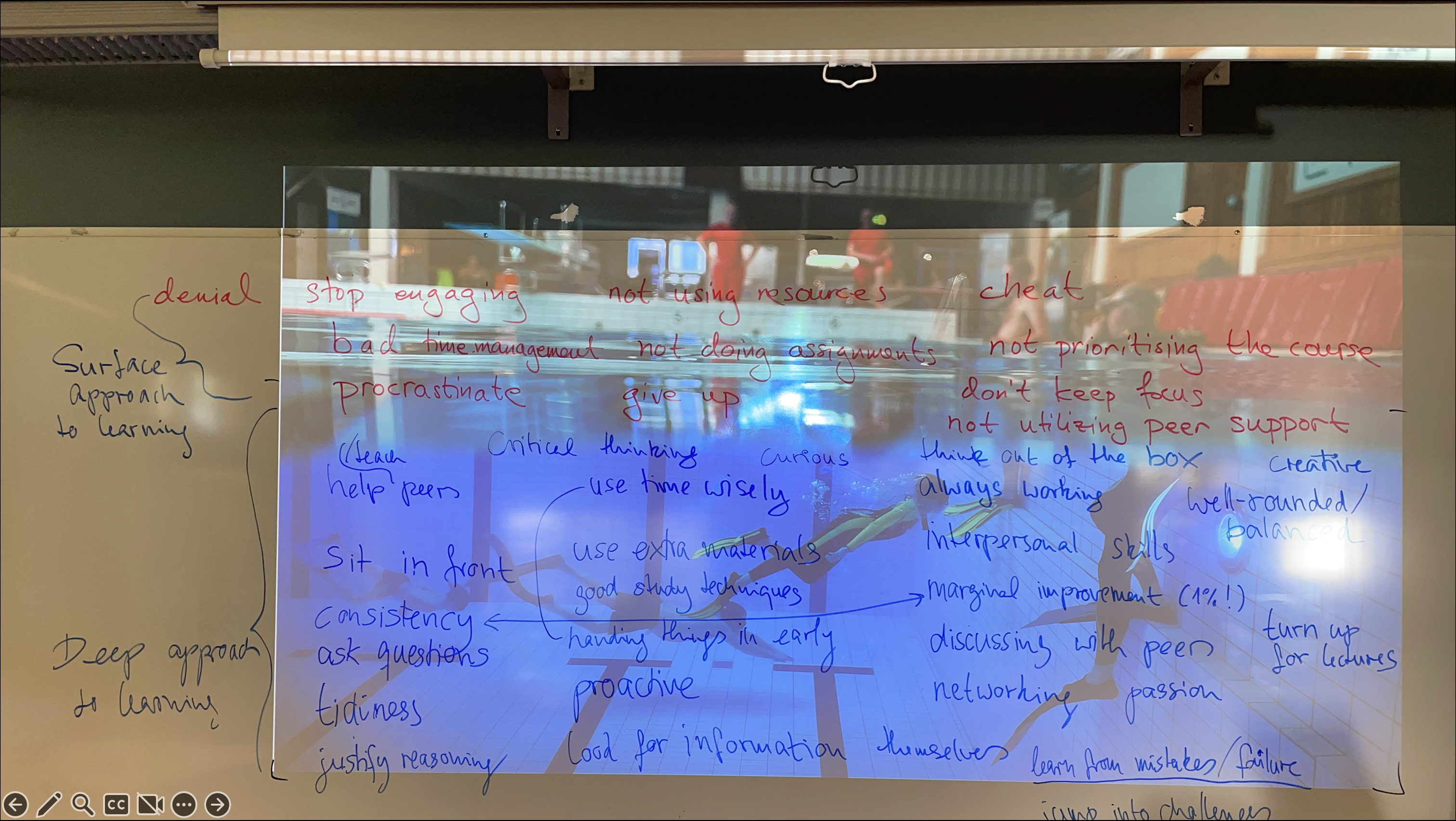

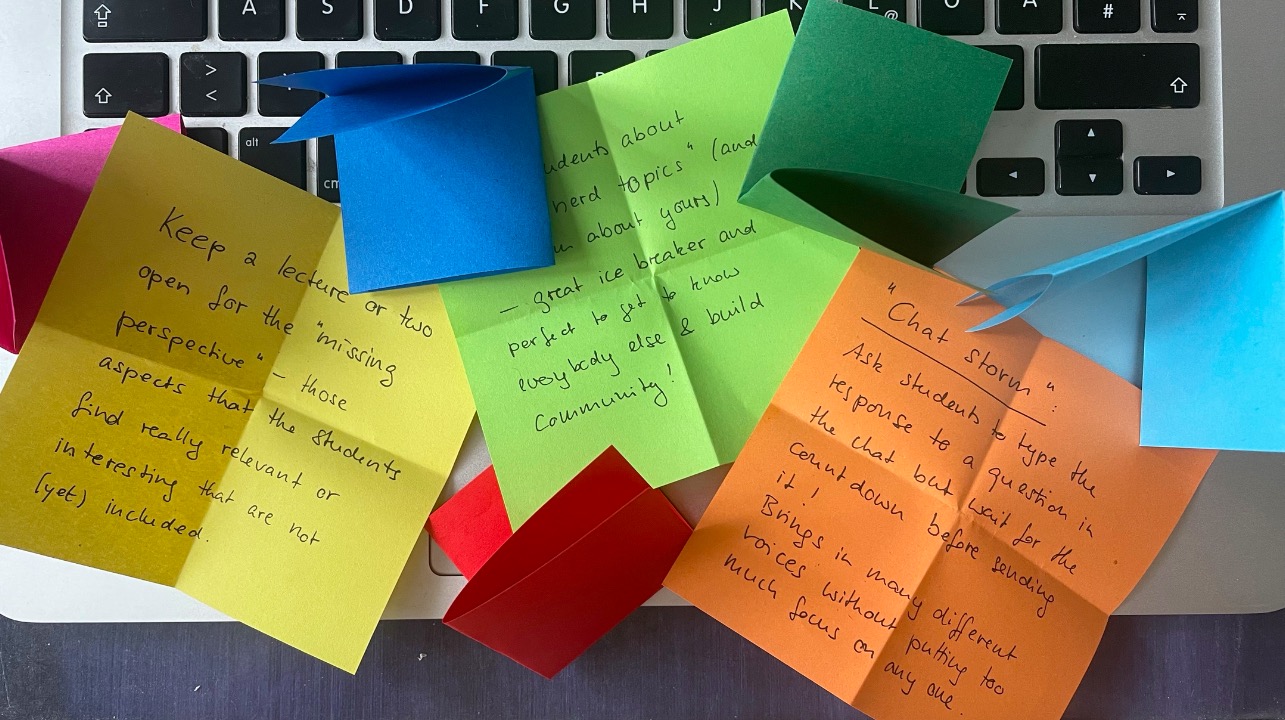

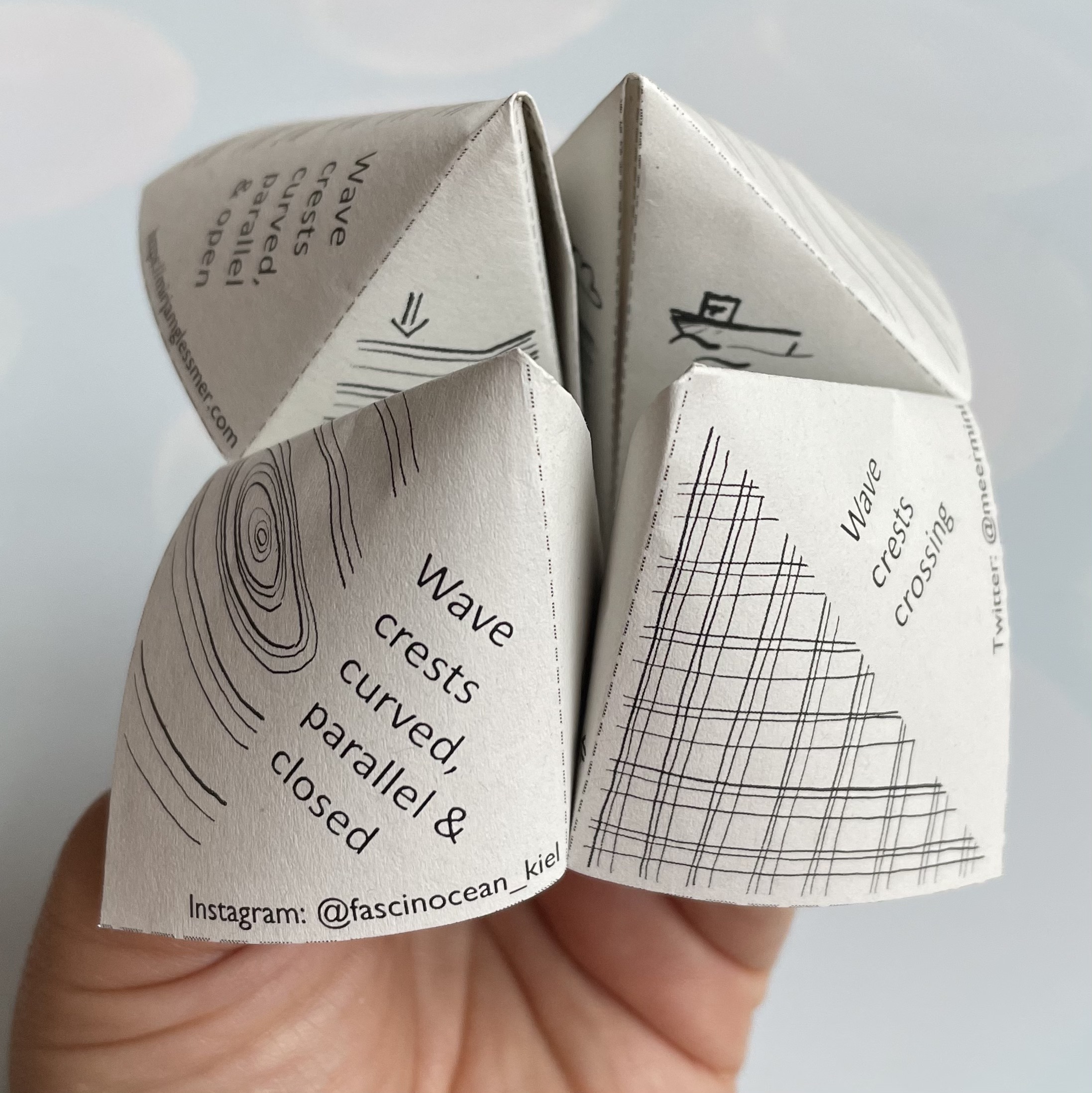

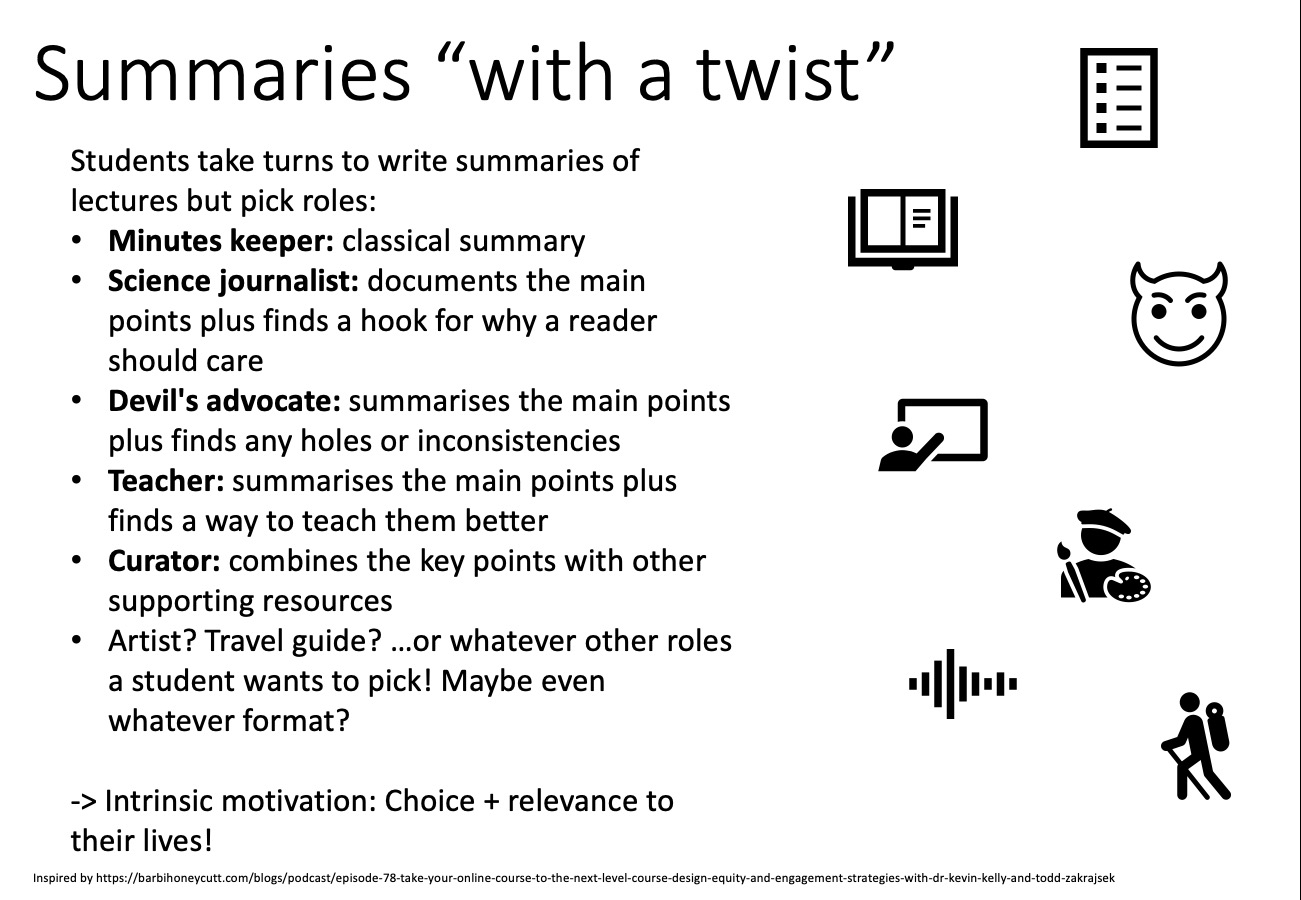

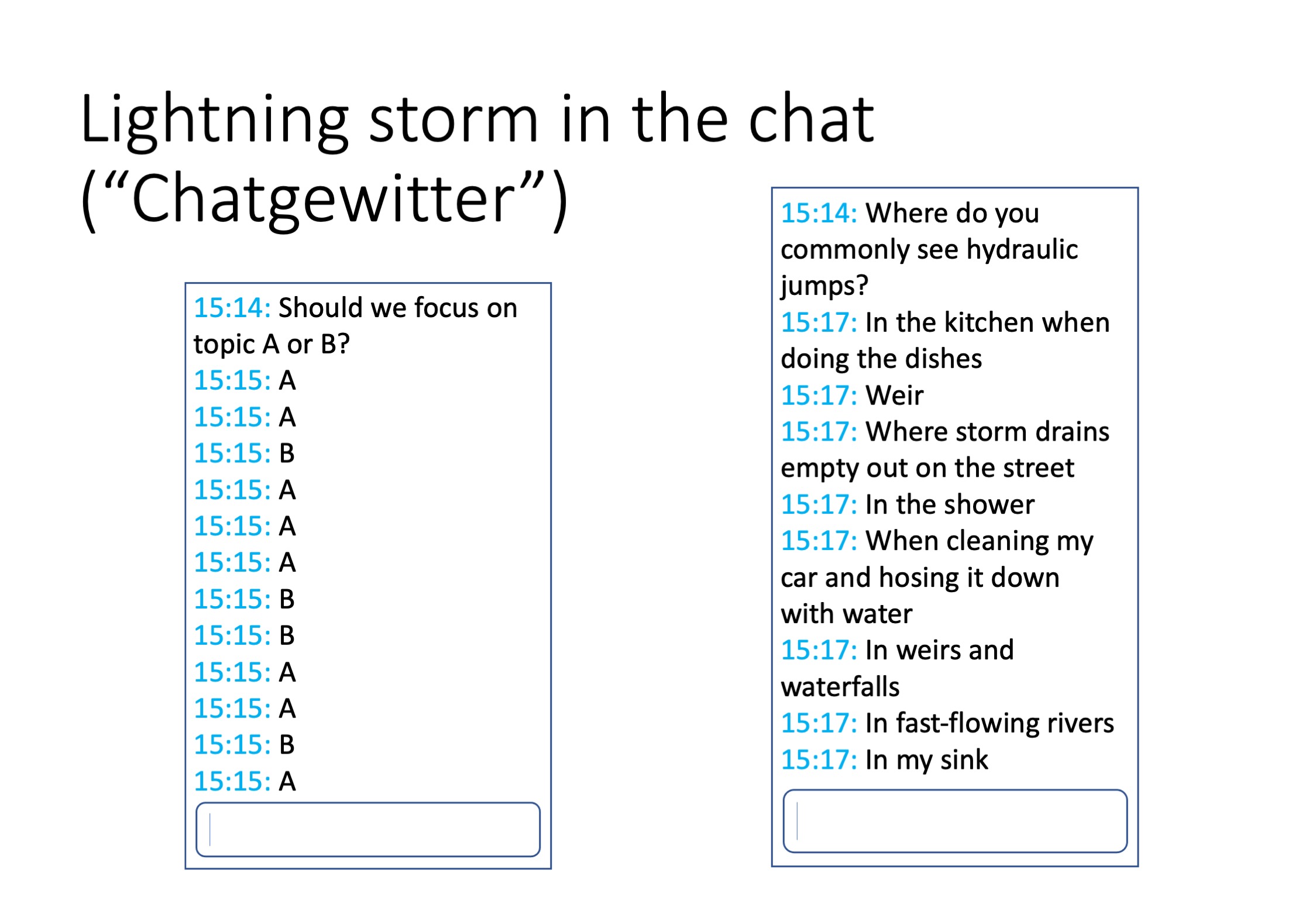

In the book “The Psychology of Collective Climate Action: Building Climate Courage” by Hamann et al. (2025), I came across Wandercoaching, a peer-coaching initiative that supports sustainability initiatives at universities. They share the materials they use here, and I especially liked the “tools for your sustainable university” guide, which is full of different methods, many of them […]