Tag: assessment

Currently reading Zeivots et al. (2026) on “Assessment design through co-design: reimagining assessment design practices in higher education”

When browsing Zeivots et al. (2026)’s article “Assessment design through co-design: reimagining assessment design practices in higher education”, one sentence caught my eye: “Students could see how their input mattered without the expectation that every suggestion would be enacted”. Since I am currently really interested in partnership and negotiations there, I then had to read […]

Currently reading: Emerson (2026) on ““It Honestly Made Me Want to Work Harder”: Student Evaluation of Using Ungrading in an Online Asynchronous Course”

As I am thinking more and more about the details of our upcoming MOOC on “Teaching for Sustainability”, I am less and less convinced that I actually want to have automated certification at the end. So Emerson (2026) on ““It Honestly Made Me Want to Work Harder”: Student Evaluation of Using Ungrading in an Online Asynchronous […]

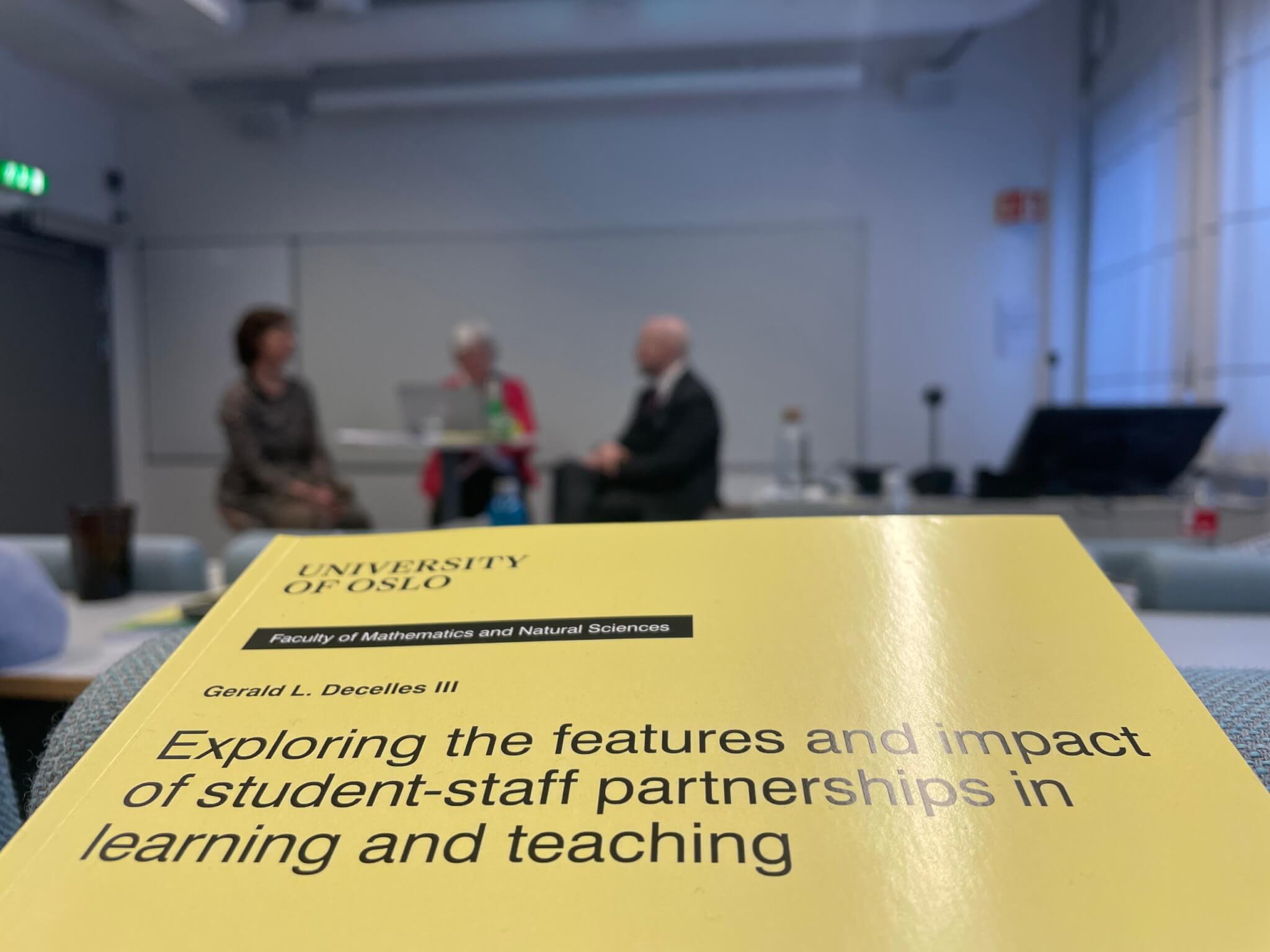

Notes from Gerald Decelles III’s trial lecture & PhD defense

Last week, I had a great day listening first to the trial lecture and then the defense of Gerald Decelles III’s PhD thesis, and it was so inspiring! Both his trial lecture and defense were such excellent presentations that I have to compile my notes into a blog post to process my thoughts. In the […]

Currently reading Fawns et al. (2026) on “Identifying what our students have learned: a framework for practical assessment validation”

It is really difficult to know if students have learned what we wanted them to learn, and especially when we have to collect evidence of that learning for a valid assessment — the best we can do is look for proxies that we take to stand for bigger and more complex outcomes. But “[t]he proxies […]

Catching up on some reading on sustainability teaching

I have a bunch of articles that were recommended during our LTHEChat on teaching sustainability last year that have been sitting in a special folder, waiting for a day like today where the best thing to do (right after a dip and a looong, comfy breakfast) is to curl up on the couch and read. […]

Listening to a podcast on the validity of assessments, and on feeding two birds with one scone

I was listening to an episode of “dead ideas in teaching and learning” on my walk back from dipping this morning (now you can really see that fall has come!) and I really enjoyed it and can well imagine using it as “recommended listening” to prepare participants for workshops on AI and/or assessment.

Currently reading Gravett (2025) on “Authentic assessment as relational pedagogy”

Authentic assessment is often understood as students doing practical, real-life, mostly outside-of-university tasks (and universities are often perceived as not part of the “real world” even though they should be, as Lockard & Bloch-Schulman (2023) also discussed). Recently, authentic assessment is even sometimes called upon as the antidote to students cheating with GenAI.

Catching up on some reading about mastery-based testing and feedback

Testing drives learning, at least to some extent. Testing can also cause a lot of stress and anxiety that hinder learning. But there might be a way of testing that actually benefits learning without causing too much stress: Mastery-based testing, provided it is combined with good feedback!

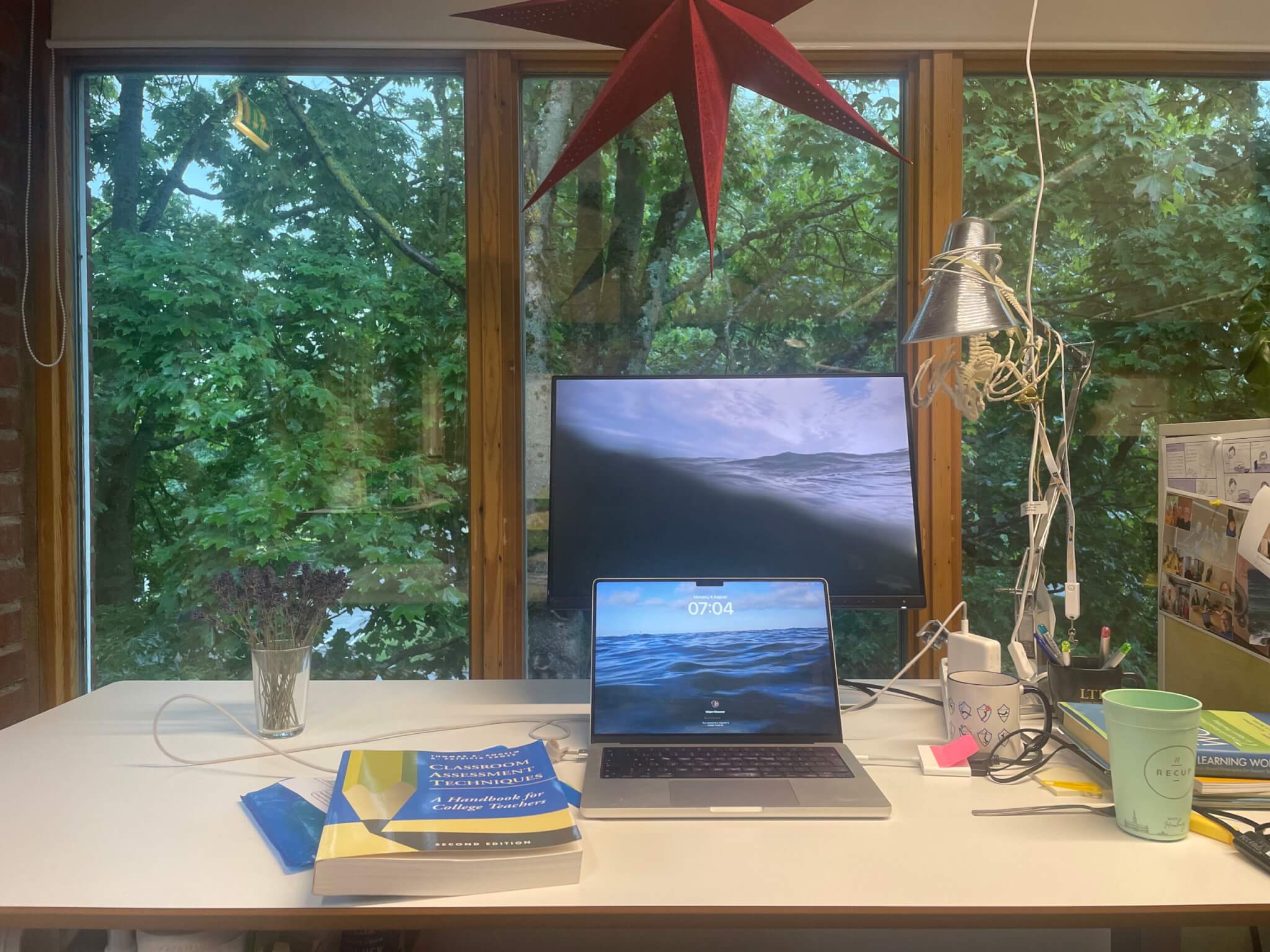

Currently reading up on Classroom Assessment Techniques

I’m currently preparing several courses and this book “Classroom Assessment Techniques” has been on my “to read during the summer” pile since last summer or so… So it was about time I moved it to my desk and dug into it! The book exists in much newer editions, this just happens to be the one […]

Currently reading Ross et al. (2024) on “Reflective writing as summative assessment in higher education: A systematic Review”

Reflective writing comes up a lot as The Solution to fix all kinds of problems related to students possibly not engaging and using GenAI to write their assignments etc. If they had to write a reflection on their learning, they would surely have to engage, right? Wrong. Of course GenAI can write nice reflections if […]

Currently reading Carless (2009) on “Trust, distrust and their impact on assessment reform”

Since reading Macfarlane’s chapter the other day, I am thinking a lot about the influence of institutional policies (rather than teacher actions in direct contact with students) on trust. Now I am reading Carless (2009), who writes that “accountability can be a source rather than a remedy for distrust”.

Currently reading Timperley & Schick (2025): “Assessment as pedagogy: inviting authenticity through relationality, vulnerability and wonder”

When I read about “eliciting wonder and joy via invitations to see the world anew” in the abstract, I knew this article would be squeezed in before I continue with my scheduled reading list for the week. I have previously written about authentic assessment, and most recently about assessment for inclusion and distinctiveness, so this […]

Currently reading Nieminen & Boud (2025) on “Student self-assessment: a meta-review of five decades of research”

There are wildly differing understandings of what student self-assessment can and should do. It can be part of supporting the learning process in formative assessment, a valuable skill to master in itself to support future learning, or part of measuring learning outcomes in summative assessment.

Reading on cheating and grading (Henslee et al., 2025; and Swanson et al., 2025)

I have such a backlog of wave watching pics that I really need to blog more… Two articles today, the first one on “Students’ Perceptions of Self and Peers Predict Self-Reports of Cheating” by Henslee et al. (2025). I really enjoyed reading about Ellis & Murdoch (2024)’s educational integrity enforcement pyramid recently, but that’s a […]

Reading about assessment for inclusion and distinctiveness

I have had the pdf of the article “‘There was very little room for me to be me’: the lived tensions between assessment standardisation and student diversity” by Nieminen et al. (2024) open on my computer for months, but now that I am going into a week of vacations (yay!!) it’s time to close documents, […]

Currently reading: Tai et al. (2022) on “Assessment for inclusion: rethinking contemporary strategies in assessment design”

I just read this really interesting and important article about “assessment for inclusion, which seeks to ensure diverse students are not disadvantaged through assessment practices” by Tai et al. (2022). Traditional assessment practices often discriminate against people in so many different ways that I had not really carefully considered before.

“The educational integrity enforcement pyramid: a new framework for challenging and responding to student cheating” by Ellis & Murdoch (2024)

When Rachel writes she agrees that an article is brilliant and important and a ‘must read’ for anyone in higher ed involved in/concerned about academic integrity and assessment security, guess I have to read it…

Unsurprising but important research: there is a sequential bias based on the order in which work is presented and then graded in learning management systems (after Wang et al., 2024)

My awesome colleague Rachel Forsyth (of our amazing “trust” paper) sent me a message saying “unsurprising but important research” and then a link to Wang et al. (2024), and that is a good summary. In a study of more than 30 million grading records in a Learning Management System, Wang et al. (2024) find that […]

Currently reading: “Sustainable assessment revisited” (Boud & Soler, 2016)

“Sustainable assessment” is about making assessment useful to learning beyond the frame of the course it is related to, not just in terms of retaining the learnt information and skills for longer, but to support future learning. Resource-intensive courses or practices might become more sustainable if they have far-reaching consequences beyond just the course, and […]

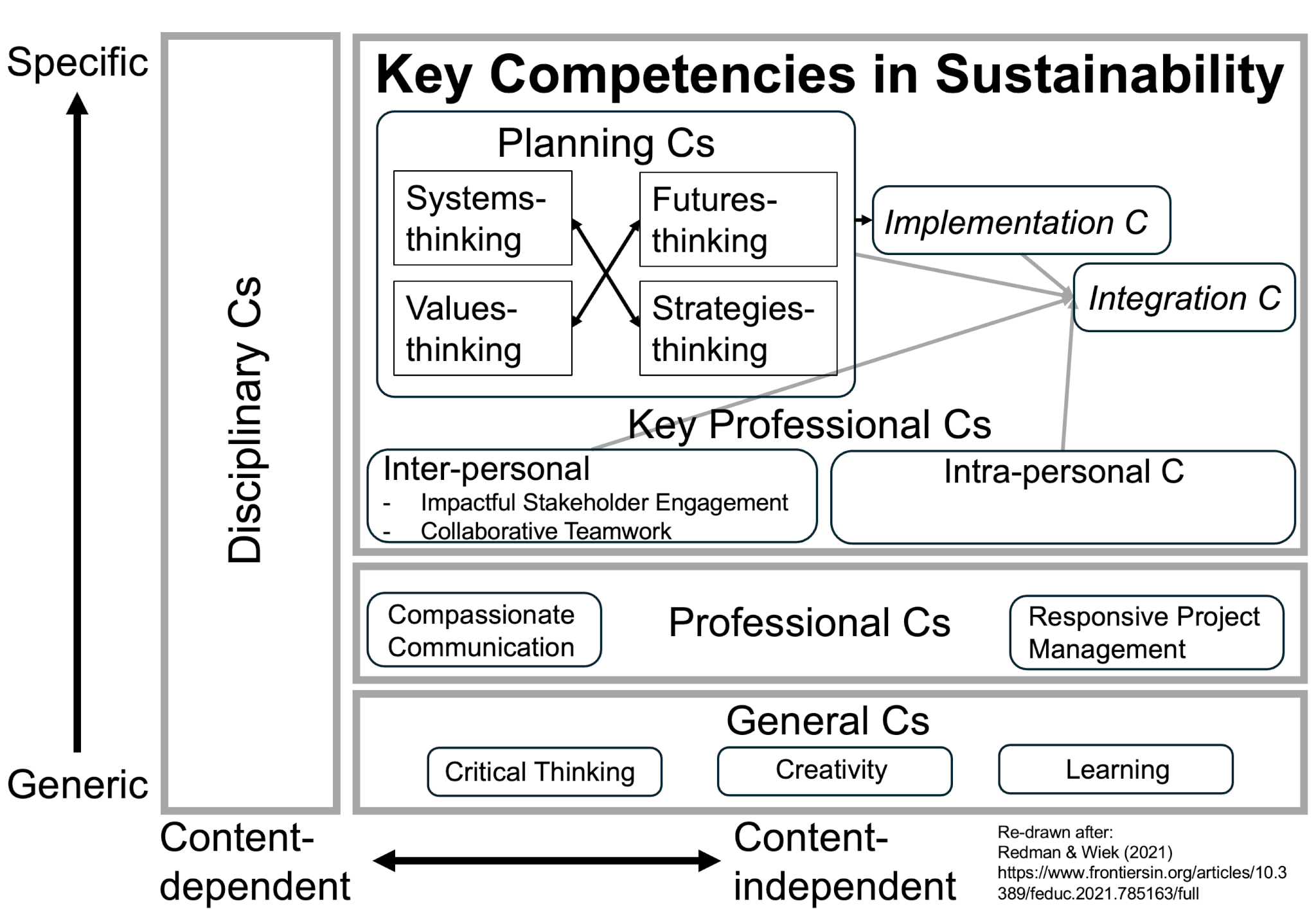

Operationalising and assessing sustainability competencies (some ideas from Wiek et al. (2016) and Redman et al. (2020))

We put a lot of effort into teaching for sustainability, but whether or not we are actually successful in doing so remains unclear until we figure out a way to operationalise learning outcomes and, obviously, ways to assess them. Below, I am summarising two articles to get a quick idea of how one might do […]

Prompt engineering and other stuff I never thought I would have to teach about

Last week, I taught a very intensive “Introduction to Teaching and Learning” course where we, like all other teachers everywhere, had to address that GAI has made many of the traditional formats of assessment hard to justify. We had to come up both with guidelines for the participants in our course on how […]

Currently reading: “The impact of grades on student motivation” (Chamberlin et al., 2023)

An argument that I encounter a lot is that student assignments need to be graded in order for students to put in any effort at all. But is that true? In the literature, grades have been connected to stress and anxiety for students, more cheating, less cooperation, less thinking, less trust — so ultimately less […]

Currently reading: “Teaching with rubrics: the good, the bad, and the ugly” (Andrade, 2005)

Doing my reading for the monthly iEarth journal club… Thanks for suggesting yet another interesting article, Kirsty! This one is “Teaching with rubrics: the good, the bad, and the ugly” (Andrade, 2005) — a great introduction on how to work with rubrics (and only 2.5 pages of entertaining, easy-to-read text, plus an example rubric). My summary […]

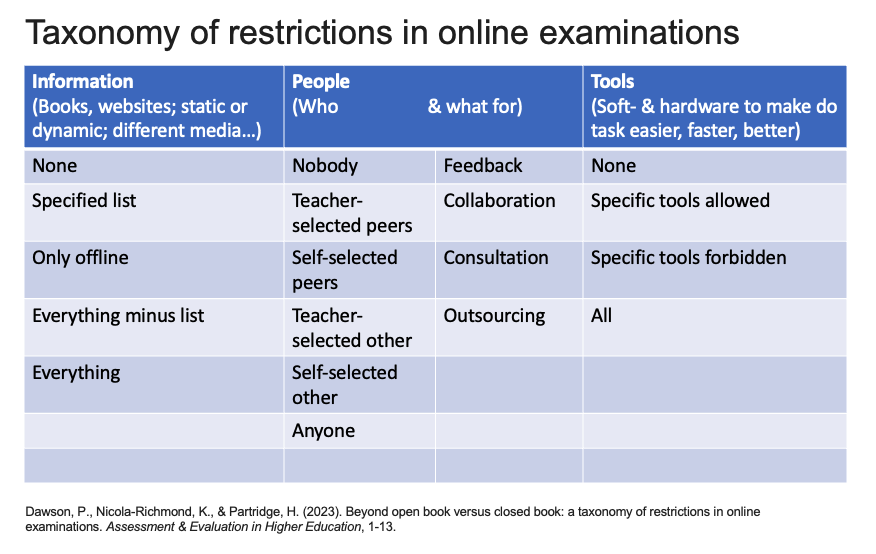

Currently reading: “Beyond open book versus closed book: a taxonomy of restrictions in online examinations.” by Dawson, Nicola-Richmond, & Partridge (2023)

If we want to do a valid assessment of what a specific student can do, we need to know what information they had available when producing the artifact we are evaluating, who they could communicate with, and what tools they had access to. And we might want to restrict access to some or all of […]

“Mandatory coursework assignments can be, and should be, eliminated!” currently reading Haugan, Lysebo & Lauvas (2017)

The claim in this article’s title, “Mandatory coursework assignments can be, and should be, eliminated!”, is quite a strong one, and maybe not fully supported by the data presented here. But the article is nevertheless worth a read (and the current reading in iEarth’s journal club!), because the arguments supporting that claim are nicely presented.

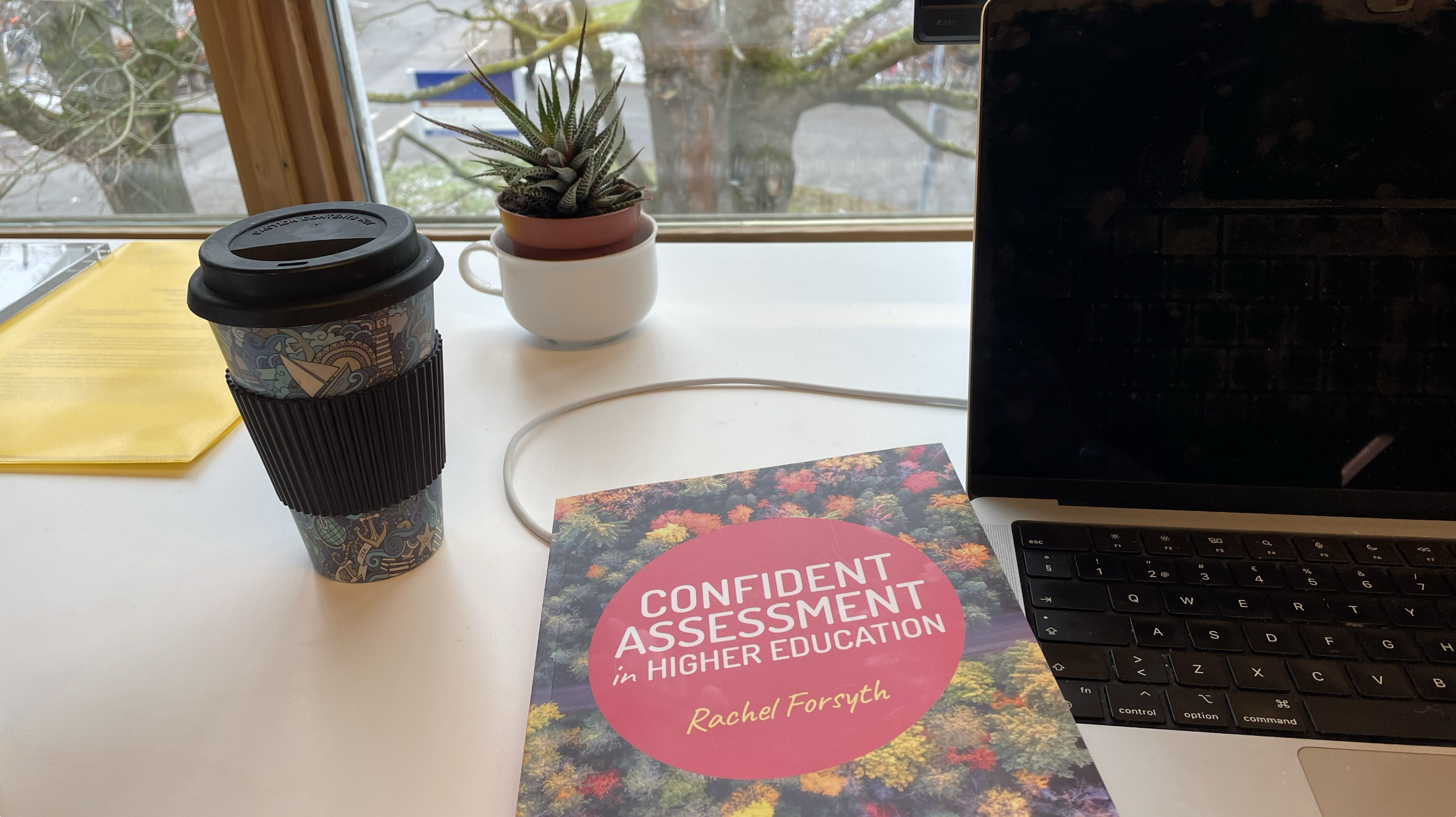

“Confident Assessment in Higher Education”, by Rachel Forsyth (2023)

I am so lucky to work with so many inspiring colleagues here at Lund University, and today I read my awesome colleague Rachel Forsyth’s new book on “confident assessment in higher education” (Forsyth, 2023). It is a really comprehensive introduction to assessment and totally worth a read, as an introduction to assessment or even just […]

Eight criteria for authentic assessment; my takeaways from Ashford-Rowe, Herrington & Brown (2014)

“Authentic assessment” is a bit of a buzzword these days. Posing assessment tasks that resemble problems that students would encounter in the real world later on sounds like a great idea. It would make learning, even learning “for the test”, so much more relevant and motivating, and it would prepare students so much better for […]

Reducing bias and discrimination in teaching: an annotated, incomplete — WORK IN PROGRESS! — list of references

Already at the time of posting, I have added to my to-read list for an updated version of this post. Please let me know of any additional literature I should include, and of any other comments you might have! As it says in the title, this work is incomplete and in progress! “The rights perspective, […]

Using peer feedback to improve students’ writing (Currently reading Huisman et al., 2019)

I wrote about involving students in creating assessment criteria and quality definitions for their own learning on Thursday, and today I want to think a bit about involving students also in the feedback process, based on an article by Huisman et al. (2019) on “The impact of peer feedback on university students’ academic writing: a Meta-Analysis”. In that […]

Co-creating rubrics? Currently reading Fraile et al. (2017)

I’ve been a fan of using rubrics — tables that contain assessment criteria and a scale of quality definitions for each — not just in a summative way to determine grades, but in a formative way to engage students in thinking about learning outcomes and how they would know when they’ve reached them. Kjersti has […]

Letting students choose the format of their assessment

I just love giving students choice: It instantly makes them more motivated and engaged! Especially when it comes to big and important tasks like assessments. One thing that I have great experience with is letting students choose the format of a cruise or lab report. After all, if writing a classical lab report isn’t a […]

Assessing participation

One example of how to give grades for participation. One of the most difficult tasks as a teacher is to actually assess how much people have learned, along with giving them a grade – a single number or letter (depending on where you are) that supposedly tells you all about how much they have learnt. Ultimately, what […]