How do we measure whether teaching interventions really do what they are supposed to be doing? (Spoiler alert: In this post, I won’t actually give a definite answer to that question. I am only talking about a paper I read and found very helpful, and reflecting on a couple of ideas I am currently pondering. So continue reading, but don’t expect me to answer this question for you! :-))

As I’ve talked about before, we are currently working on a project where undergraduate mathematics and mechanics teaching are linked via online practice problems. Now that we are implementing this project, it would be very nice to have some sort of “proof” of its effectiveness.

My (personal) problem with control group studies

Control group studies are likely the most common way to “scientifically” determine whether a teaching intervention had the desired effect. This has rubbed me the wrong way for some time: if I am so convinced that I am improving things, how can I keep my new and improved course from half of the students that I am working to serve? Could I really live with myself if we, for example, measured that half of the students in the control group dropped out within the first three or four weeks of our undergraduate mathematics course, while far fewer students in the experimental group dropped out, and only much later in the semester? On the other hand, if our intervention had such a large effect, shouldn’t we be measuring it (at least once) in a classical control group study, so we know for sure what its effect is, in order to convince stakeholders at our and other universities that our intervention should be adopted everywhere? If the intervention really improves things this much, everybody should see the most compelling evidence so that everybody starts adopting it, right?

A helpful article

Looking for answers to the questions above, I asked Nicki for help, and she pointed me to a presentation by Nick Tilley (2000), which I found really eye-opening and helpful for framing those questions differently and for starting to find answers. The presentation is about evaluation in a social sciences context, but it transfers easily to education research.

In this presentation, Tilley first places the proposed method of “realistic evaluation” in the larger context of philosophy of science. For example, Popper (1945) suggests using small-scale interventions to deal with specific problems instead of large interventions that address everything at once, and points to the opportunity this offers to test and improve the theories on which those small-scale interventions were built. Similarly, Campbell (1999) talks about “reforms as experiments”. So the “realistic evaluation” paradigm has been around for a while, partly in conflict with how we do science “conventionally”.

Reality is too complex for control group studies

Then, Tilley talks about classical methods, specifically control group experiments, and argues that, in contrast to what is portrayed in washing detergent ads, for example, studies are typically too complex for their results to transfer directly between different contexts. In contrast to what science typically does, we are also not investigating a law of nature, where the goal is to understand a mechanism causing a regularity in a given context. Rather, we are investigating how we can cause a change in a regularity. This means we are asking the question “what works for whom in what circumstances?”. With our intervention, we might be introducing different mechanisms, triggering a change in the balance of several mechanisms, and hence changing the regularities under investigation (which, btw, is our goal!), all by changing the context.

The approach for evaluations of interventions should therefore, according to Tilley, be “Context Mechanism Outcome Configurations” (CMOCs), which describe the interactions between context, mechanism and outcome. In order to create such a description, one needs to clearly describe the mechanisms (“what is it about a measure which may lead it to have a particular outcome pattern in a given context?”), the context (“what conditions are needed for a measure to trigger mechanisms to produce particular outcome patterns?”), and the outcome pattern (“what are the practical effects produced by causal mechanisms being triggered in a given context?”). This finally leads to CMOCs (“How are changes in regularity (outcomes) produced by measures introduced to modify the context and balance of mechanisms triggered?”).

Impact of CCTV on car crimes — a perfect example for control group studies?

Tilley gives a great example of how this works. The effect of CCTV on car crime rates seems easy to measure with a classical control group setup. Just install the cameras and compare crime rates in car parks with cameras to those in car parks without! However, once you start thinking about how the CCTV cameras could influence crime rates, there are lots of different possible mechanisms. Eight are named explicitly in the presentation. For example, offenders could be caught thanks to CCTV and go to jail, hence crime rates would drop. Or criminals might choose not to commit crimes because the risk of being caught has increased due to CCTV, which would again result in lower crime rates. Or people might feel more secure in the car park and therefore start using it more, making it busier at previously less busy times, which makes car theft more difficult and risky and again leads to dropping crime rates.

But then, we also need to think about context, and how car parks and car park crimes potentially differ. For example, the crime rate can be the same whether there are a few very active criminals or many less active ones. So catching a similar number of offenders might have a different effect, depending on context. Or the pattern of usage of car parks might depend on the working hours of people working close by. So if the dominant CCTV mechanism were to increase confidence in usage, this would not really help, because the busy hours are dictated by people’s schedules, not by how safe they feel. If it did lead to higher usage, however, more cars being around might mean more car crimes because there are more opportunities, yet still a decreased crime rate per use. Another context would be that thieves might just look for new targets outside of the one car park that is now equipped with CCTV, thereby just displacing the problem elsewhere. And there are a couple more contexts mentioned in the presentation.
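To make two of these context effects concrete, here is a small sketch with made-up numbers. None of the figures come from Tilley’s presentation, the function names are my own, and the sketch assumes that an arrested offender is simply removed and not replaced by anyone else.

```python
def crimes_after_arrests(offenders: int, crimes_each: int, arrests: int) -> int:
    """Crimes per year that remain once `arrests` offenders are removed.

    Assumes every offender commits the same number of crimes and that
    arrested offenders are not replaced (a strong simplification).
    """
    remaining_offenders = max(offenders - arrests, 0)
    return remaining_offenders * crimes_each


# Two hypothetical car parks, both starting at 100 crimes per year.
# In both, CCTV leads to two arrests.
few_very_active = crimes_after_arrests(offenders=5, crimes_each=20, arrests=2)
many_less_active = crimes_after_arrests(offenders=50, crimes_each=2, arrests=2)
print(few_very_active, many_less_active)  # 60 96


def crimes_per_use(crimes: int, uses: int) -> float:
    """Crime rate expressed per use of the car park."""
    return crimes / uses


# Hypothetical: usage doubles after CCTV, and crimes rise from 100 to 120.
rate_before = crimes_per_use(crimes=100, uses=10_000)
rate_after = crimes_per_use(crimes=120, uses=20_000)
print(rate_before, rate_after)  # 0.01 0.006
```

With identical baselines and identical numbers of arrests, the first car park ends up with 60 crimes and the second with 96. And in the usage scenario, the absolute number of crimes goes up while the rate per use goes down, so which one counts as “success” depends on what we decided to measure.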

Long story short: Even for a relatively simple problem (“how does CCTV affect car crime rate?”), there is a wide range of mechanisms and contexts which will all have some sort of influence. Just investigating one car park with CCTV and a second one without will likely not lead to results that help solve the car crime issue once and for all everywhere. First, theories of what exactly the mechanisms and contexts are for a given situation need to be developed, and then other methods of investigation are needed to figure out what exactly is important in any given situation. Do people leave their purses sitting out visibly in the same way everywhere? How are CCTV cameras positioned relative to the cars being stolen? Are usage patterns the same in two car parks? All of this and more needs to be addressed to sort out which of the context-mechanism theories above might be dominant at any given car park.

Back to mathematics learning and our teaching intervention

Let’s get back to my initial question, which, btw, is a lot more complex than the example given in the Tilley presentation. How can we know whether our teaching intervention is actually improving anything?

Mechanisms at play

First, let’s think about possible mechanisms at play here. “What is it about a measure which may lead it to have a particular outcome pattern in a given context?” Without claiming that this is a comprehensive list, here are a couple of ideas:

a) students might realize that they need mathematics to work on mechanics problems, increasing their motivation to learn mathematics

b) students might have more opportunity to receive feedback than before (because now the feedback is automated), and more feedback might lead to better learning

c) students might appreciate the effort made by the instructors, feel more valued and taken seriously, and therefore be more motivated to put in effort

d) students might prefer the online setting over classical settings and therefore practice more

e) students might have more opportunity to practice because of the flexibility in space and time given by the online setting, leading to more learning

f) students might want to earn the bonus points they receive for working on the practice problems

g) students might find it easier to learn mathematics and mechanics because they are presented in a clearer structure than before

Contexts

Now contexts. “What conditions are needed for a measure to trigger mechanisms to produce particular outcome patterns?” Are all students and all student difficulties with mathematics the same? (Again, this is just a spontaneous brainstorm; this list is nowhere near comprehensive!)

– if students’ motivation to learn mathematics increased because they see that they will need it for other subjects (a), this might lead to them only learning those topics where we manage to convey that they really really need them, and neglecting all the topics that might be equally important but where we, for whatever reasons, just didn’t give as convincing an example

– if students really value feedback this highly (b), this might work really well, or there might be better ways to give personalised feedback

– if students react to feeling more valued by the instructor (c), this might only work for the students who directly experienced a before/after when the intervention was first introduced. As soon as the intervention has become old news, future cohorts won’t show the same reaction any more. It might also only work in a context where students typically don’t feel as valued so that this intervention sticks out

– if students prefer the online setting over classical settings generally (d), or appreciate the flexibility (e), this might work for us while we are one of the few courses offering such an online setting. But once other courses start using similar settings, we might be competing with others, and students might spend less time with us and our practice problems again

– if students mainly work for the bonus points (f), their learning might not be as sustainable as if they were intrinsically motivated. And as soon as there are no more bonus points to be gained, they might stop using any opportunity for practice just for practice’s sake

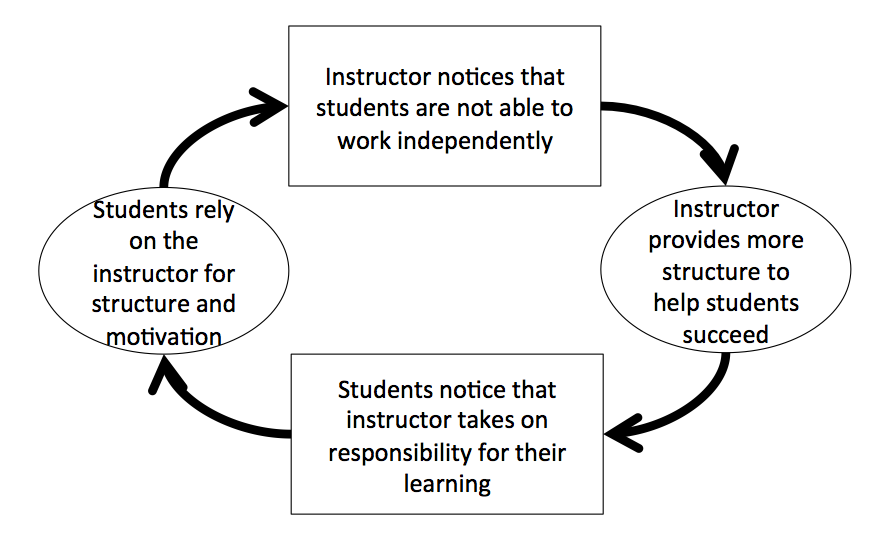

– providing students a structure (g) might make them depend on it, harming their future learning (see my post on this vicious circle, or “Teufelskreis”).

Outcome pattern

Next, we look at outcome patterns: “what are the practical effects produced by causal mechanisms being triggered in a given context?”. So which of the mechanisms identified above (and possibly others) seem to be at play in our case, and how do they balance each other? For this, we clearly need a different method than “just” measuring the learning gain in an experimental group and comparing it to a control group. We need a way to identify the mechanisms that are at play in our case, and those that are not. We then need to figure out the balance of those mechanisms. Is the increased interest in mathematics more important than students potentially being put off by the online setting? Or is the online setting so appealing that it compensates for the lack of interest in mathematics? Can we show students that we care about them without rolling out new interventions every semester, and will that motivate them to work with us? Do we really need to show the practical application of every tiny piece of mathematics in order for students to want to learn it, or can we make them trust us that we are only teaching what they will need, even if they aren’t yet able to see what they will need it for?

This is where I am currently at. Any ideas of how to proceed?

CMOCs

And finally, we have reached the CMOCs (“How are changes in regularity (outcomes) produced by measures introduced to modify the context and balance of mechanisms triggered?”). Assuming we have identified the outcome patterns, we would need to figure out how to change those outcome patterns, either by changing the context, or by changing the balance of mechanisms being triggered.
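One way I could imagine keeping track of such configurations is to write each one down as a small record and then group them by mechanism. The sketch below is only my own illustration, not something from Tilley’s presentation: the `CMOC` class, its field names and the helper function are hypothetical, and the entries are hypotheses taken from my brainstorm above, not findings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CMOC:
    """One hypothesised context-mechanism-outcome configuration."""
    context: str
    mechanism: str
    outcome: str

    def summary(self) -> str:
        return (f"Context: {self.context} | "
                f"Mechanism: {self.mechanism} | "
                f"Expected outcome: {self.outcome}")


# Hypotheses only, paraphrased from the mechanism and context lists above.
hypotheses = [
    CMOC(context="bonus points are still on offer",
         mechanism="(f) students want to earn bonus points",
         outcome="students work on the practice problems"),
    CMOC(context="bonus points are no longer on offer",
         mechanism="(f) students want to earn bonus points",
         outcome="students may stop practising"),
    CMOC(context="later cohorts who never saw the course without the intervention",
         mechanism="(c) students feel valued by the instructors",
         outcome="the motivation boost may fade"),
]


def by_mechanism(label: str, items: list[CMOC]) -> list[CMOC]:
    """Return all configurations whose mechanism starts with the given label."""
    return [item for item in items if item.mechanism.startswith(label)]


for item in by_mechanism("(f)", hypotheses):
    print(item.summary())
```

Seeing the same mechanism listed with different contexts and different expected outcomes is exactly the point: it makes explicit which contexts we would have to observe, or change, to find out which configuration applies to us.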

After reading this article and applying the concept to my project (and I only read the article today, so my thoughts will hopefully evolve some over the next couple of weeks!), I feel that the control group study that everybody seems to expect from us is not as valid as most people might think. As I said above, I don’t have a good answer yet for what we should do instead. But I found it very eye-opening to think about evaluations in this way and am confident that we will figure it out eventually! Luckily we have only run a small-scale pilot at this point, and there is still some time before we start rolling out the full intervention.

What do you think? How should we proceed?