Currently reading Lodge et al. (2023) on “It’s not like a calculator, so what is the relationship between learners and generative artificial intelligence?”

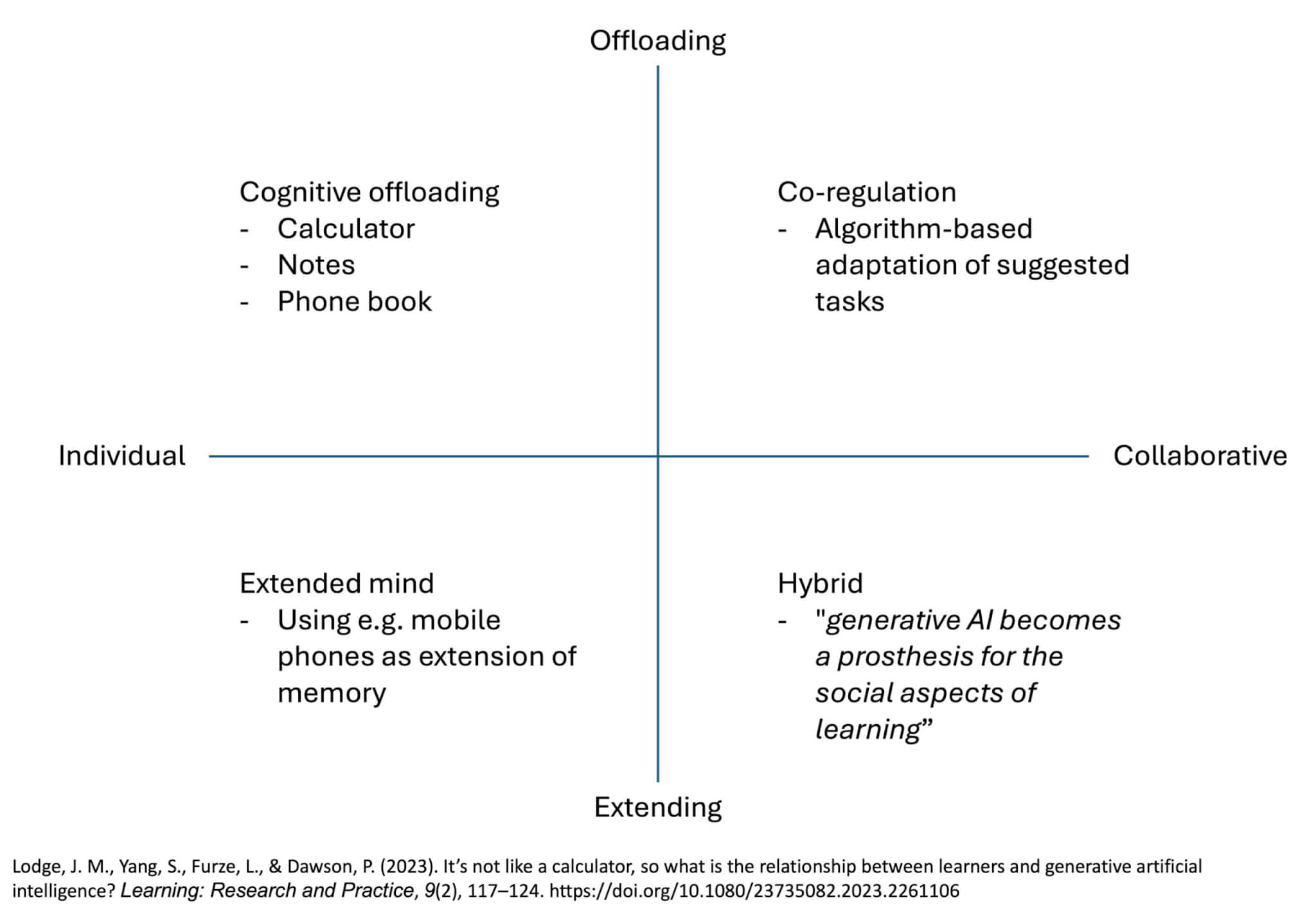

Intriguing title! Lodge et al. (2023) discuss a “quadrant typology of human and machine interactions for education” with the two axes individual-to-collaborative and extending-to-offloading. Calculators fall into “cognitive offloading”, the quadrant that is both individual and offloading.

They explain cognitive offloading, writing “At the core of these approaches is that basic cognitive functions are subcontracted out to technology, ostensibly freeing up capacity for other mental tasks”. Another example is taking notes so that some information does not need to be actively remembered all the time. Does anyone remember the little phone books where you keep the phone numbers you don’t want to memorize? But that also meant that most of those numbers were never memorized… So while cognitive offloading can be super useful, it should also only be used knowing the drawbacks, and potentially be scaffolded to make sure important skills are acquired before (partly) being offloaded later (like multiplication tables).

Still on an individual level but now going into extending rather than offloading, the “extended mind” quadrant is really that — in the example they give it’s like the relationship some people (me!) have with their mobile phones, where “rather than offloading the storage, the phone becomes an integrated part of their memory, extending it” (and there are obvious dangers in that approach…). If AI is used as extended mind, the idea is that more becomes possible than would be for either the human or the AI alone.

In the collaborative-offloading quadrant, we have “co-regulation of learning”, where the learning is regulated by both humans and an adaptive learning tool together, possibly by an algorithm detecting stuff and thus changing how/which content and tasks are presented to the learner. The discussion of this point reads very 2023; likely the capabilities of AI would be described differently by now. But in any case, “[h]uman learners need to self-monitor their learning goals and states, continuously evaluate the AI responses, and adapt their own learning strategies or prompts to AI” (and at least Poulidis et al. (2025) found that, for many learners, adapting their own learning strategy amounted to wishing that there was no “support” from AI).

Lastly, in the collaborative-extending quadrant, “hybrid learning” is “an educational approach where generative AI systems work in conjunction with human learners to promote both cognitive and metacognitive aspects of learning”, where “generative AI becomes a prosthesis for the social aspects of learning”. Sounds terrifying, actually! But on the other hand, as kids, we used to have a “little professor” solar-powered game thing, where you could practice doing easy maths tasks, and the very pixelated “professor” would happily and approvingly wiggle their eyebrows whenever you got something right, and after five tasks (I think), there were stars dancing on the display. Did that give us some kind of prosthesis for the social aspect of learning? Here we were clearly very aware that we were not interacting with an intelligence of some sort…

Anyway, this typology is an interesting way to try to look at AI and, similarly to trying to de-anthropomorphize AI, make the discussion a bit more nuanced and more precise in what AI can and cannot do, and what we want to use it for and what not. Which seems very needed in times of polarization…

Lodge, J. M., Yang, S., Furze, L., & Dawson, P. (2023). It’s not like a calculator, so what is the relationship between learners and generative artificial intelligence? Learning: Research and Practice, 9(2), 117–124. https://doi.org/10.1080/23735082.2023.2261106